Innovation Funnel: U.S. Air Force

Increased the Innovation Advancement Rate by 22% (turning a noise-generating intake into a signal-producing pipeline), reduced off-ramping by 6% (protecting contributor engagement), and made decisions 3x faster (compressing months of committee review into weeks).

+22% Innovation Advancement Rate

Innovation Funnel: U.S. Air Force — System Diagram

The Problem

The U.S. Air Force's innovation program had three compounding failures. First, it lacked a structured lifecycle for advancing ideas: submissions disappeared into the pipeline without feedback, creating a suggestion-box dynamic that killed contributor trust. Second, the program operated in tribal silos: Airmen doing similar work across disparate bases had no mechanism to share intelligence, so the same problems were being solved independently in isolation. Third -- and most revealing -- the program had a hidden capacity problem: leadership asked for more submissions, and when volume increased, they were overwhelmed and unable to deliver the quality feedback that would have made the program credible. The result was stalled initiatives, declining engagement, and an innovation culture paralyzed by the fear of failure. The word 'Fail' was ending conversations that should have been starting them.

Productable was building an innovation management platform, and the U.S. Air Force was one of its most demanding clients. The Air Force's innovation program had a real problem: it could generate ideas, but it couldn't systematically advance them. The submission process was clear, but the evaluation and advancement process was opaque -- contributors didn't know what happened to their ideas after submission, and program managers didn't have a reliable framework for deciding which ideas to advance.

The result was a pipeline that looked full but wasn't moving. Ideas accumulated at each stage without clear advancement criteria. Contributors who submitted ideas and heard nothing back stopped submitting. Program managers who lacked a structured evaluation framework defaulted to advancing ideas based on familiarity rather than merit. The innovation program was generating activity without generating outcomes.

The tribal silo problem compounded everything. Airmen at different bases were working on identical challenges -- logistics inefficiencies, maintenance workflow gaps, communication breakdowns -- without any mechanism to connect them. The same problem was being solved six times over in six different locations, with no cross-pollination of what worked. The institutional knowledge was there; the connective tissue was missing.

The language problem was equally damaging. When an idea didn't advance, the system called it a failure. "Fail" ends a conversation. It signals to the contributor that their judgment was wrong, their effort was wasted, and future submissions carry the same risk. The psychological safety required for innovation -- the willingness to propose something that might not work -- was being systematically destroyed by a single word.

My insight was to treat the innovation pipeline as a decision system, and to redesign both the language and the architecture simultaneously. I replaced "Pass/Fail" with "Graduate/Remediate": Graduate means the idea advances; Remediate means the idea needs more development, and here is exactly what it needs. Remediate invites collaboration. It tells the contributor: your idea has merit, and I want to help you make it stronger. This single language change had a measurable impact on re-submission rates.

The Echo System addressed the tribal silo problem. By connecting Airmen in similar roles across disparate bases -- creating structured channels for cross-pollinated knowledge sharing -- I built the connective tissue that transformed isolated problem-solvers into a networked intelligence ecosystem. The same insight that solved a logistics problem at one base could now be surfaced to the Airman facing the same challenge at another base within days rather than years.

But the most instructive lessons from this engagement came from the feedback research -- and they were not what anyone expected.

Leadership told us they wanted more submissions. We took that at face value and optimized for volume. Submission rates increased. Then the real problem surfaced: leadership was overwhelmed. They didn't have the bandwidth to review the increased volume with the depth and quality that would make feedback meaningful to contributors. The ask for "more submissions" was actually an ask for a better signal-to-noise ratio -- for a pipeline that surfaced the right ideas, not more ideas. The lesson: when a stakeholder asks for more of something, the real requirement is almost always better quality of that thing. Volume without evaluation capacity is not a feature; it is a liability.
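The volume paradox can be illustrated with simple backlog arithmetic. The sketch below is not from the engagement: the function name `review_backlog` and every number in it are hypothetical, chosen only to show how an unreviewed queue grows once weekly submissions exceed weekly review capacity.

```python
def review_backlog(weekly_submissions, weekly_review_capacity, weeks):
    """Return the unreviewed backlog at the end of each week.

    All inputs are hypothetical illustration values, not program data.
    """
    backlog = 0
    history = []
    for _ in range(weeks):
        # New submissions arrive, reviewers clear what they can.
        backlog = max(0, backlog + weekly_submissions - weekly_review_capacity)
        history.append(backlog)
    return history


# Volume below capacity: the queue stays empty.
print(review_backlog(20, 25, 4))  # [0, 0, 0, 0]

# Volume doubled, capacity unchanged: the queue grows every week.
print(review_backlog(40, 25, 4))  # [15, 30, 45, 60]
```

Under these assumed numbers, doubling intake without adding review capacity leaves 60 ideas waiting after four weeks, which is the condition under which feedback quality degrades.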

The contributor feedback was equally revealing. Airmen told us they needed a mobile application -- they were in the field, not at a desk, and the submission experience was built for a desktop environment that didn't match their operational reality. They needed audible capture: the ability to dictate ideas and observations in the moment, before the insight was lost. They needed an AI compilation engine that could take their raw voice notes and transform them into structured, reviewable submissions with feedback, insights, and guidance built in. And they were managing their innovations across too many tools: multiple entry points, fragmented tracking, no single surface where they could see the status of everything they had submitted or were working on.

We ran several design exercises to address these needs. They all failed. The reason was not execution -- it was scope. We were designing in a vacuum, assuming Productable was the only tool in the ecosystem. We hadn't addressed the elephant in the room: how and where did our platform fit and play with the other innovation management platforms already in use? The Air Force's innovation ecosystem was not a blank slate. There were existing tools, existing workflows, and existing integrations. Until we answered the ecosystem positioning question -- what is Productable's specific role, and what does it hand off to adjacent platforms -- every feature we built was solving the wrong problem.

The ecosystem positioning work became the foundation for the roadmap. Once we defined Productable's lane -- the submission, evaluation, and advancement layer -- we could design the mobile capture and AI compilation features as inputs to that lane, not as replacements for the broader ecosystem. The tool fragmentation problem was solved not by building everything, but by defining the handoffs clearly.

What I Built

I built a stage-gated innovation lifecycle with Graduate/Remediate language replacing Pass/Fail, structured submission and evaluation workflows, and the Echo System: a cross-base knowledge-sharing architecture that connected Airmen in similar roles across disparate bases. I redesigned the contributor experience to surface decision criteria clearly, introduced recurring engagement mechanisms to sustain participation, and built the feedback loops that closed the contributor-to-decision gap. I also led the ecosystem positioning work that defined Productable's specific role within the broader innovation tool landscape -- the prerequisite that made every subsequent feature decision coherent.

Key Actions

I redesigned the innovation submission experience to structure inputs earlier, reducing low-quality submissions that were causing off-ramping

I defined clear stage-gate criteria for each advancement decision, making the evaluation process transparent and consistent

I built contributor feedback loops that communicated advancement decisions with rationale, increasing re-submission rates

I introduced recurring engagement mechanisms -- weekly digests, milestone notifications, peer voting -- to sustain participation over time

I instrumented the pipeline to track advancement rates, off-ramping points, and contributor retention by cohort

I conducted leadership feedback research that revealed the volume paradox: the ask for 'more submissions' was actually an ask for a better signal-to-noise ratio, not a higher raw count

I conducted contributor feedback research that surfaced four unmet needs: mobile capture, audible dictation with AI compilation, AI-driven guidance on submissions, and a unified tracking surface to replace fragmented multi-tool workflows

I led ecosystem positioning workshops to define Productable's specific role within the broader innovation tool landscape -- the prerequisite that resolved the design vacuum problem and made the mobile and AI feature roadmap coherent
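The pipeline instrumentation described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the schema Productable used: the `Decision` record, its field names, the outcome labels, the stage names, and the sample data are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One stage-gate decision (hypothetical record shape)."""
    idea_id: str
    cohort: str   # contributor cohort label, e.g. "cohort-a"
    stage: str    # gate at which the decision was made
    outcome: str  # "graduate", "remediate", or "off_ramp"


def advancement_rate_by_cohort(decisions):
    """Share of each cohort's gate decisions that were 'graduate'."""
    totals = defaultdict(int)
    graduated = defaultdict(int)
    for d in decisions:
        totals[d.cohort] += 1
        if d.outcome == "graduate":
            graduated[d.cohort] += 1
    return {cohort: graduated[cohort] / totals[cohort] for cohort in totals}


def off_ramp_counts_by_stage(decisions):
    """Count of ideas leaving the pipeline at each stage."""
    counts = defaultdict(int)
    for d in decisions:
        if d.outcome == "off_ramp":
            counts[d.stage] += 1
    return dict(counts)


# Hypothetical sample data for illustration only.
sample = [
    Decision("idea-1", "cohort-a", "screen", "graduate"),
    Decision("idea-2", "cohort-a", "screen", "remediate"),
    Decision("idea-1", "cohort-a", "pilot", "graduate"),
    Decision("idea-3", "cohort-b", "screen", "off_ramp"),
]
print(advancement_rate_by_cohort(sample))
print(off_ramp_counts_by_stage(sample))
```

Keeping every gate decision as its own record, rather than storing only an idea's current status, is what makes it possible to see where ideas off-ramp and how each cohort fares over time.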

Key Business Impact

Innovation Advancement Rate increased 22%. Off-ramping fell 6%. Decision velocity increased 3x. Contributor re-submission rates improved. Program managers reported higher confidence in advancement decisions. The pipeline began generating outcomes rather than just activity.

An innovation program without a decision framework is a suggestion box. The stage-gated lifecycle transformed the Air Force's program from a collection of ideas into a system that consistently identified the ideas worth pursuing. The Graduate/Remediate language change protected the psychological safety required for innovation. The Echo System turned isolated problem-solvers into a networked intelligence ecosystem. The 22% advancement rate increase and 3x decision velocity improvement are the measurable outcomes of replacing intuition with structure -- and replacing 'Fail' with 'Remediate.' The leadership paradox -- asking for more submissions and then being overwhelmed by them -- is a product management lesson that transcends this engagement: stakeholder requests are rarely literal. The contributor research exposed a product-market fit gap that no amount of feature work could close until the ecosystem positioning question was answered first. Both lessons are transferable to any platform where multiple stakeholders have conflicting definitions of success.

If we didn't fix this

The innovation pipeline would have continued accumulating stalled initiatives with no structured mechanism for advancement or termination.

Contributor engagement would have continued declining as ideas disappeared into the pipeline without feedback.

Program managers would have continued advancing ideas based on familiarity rather than merit, undermining the program's credibility.

The volume paradox would have compounded: more submissions without evaluation capacity would have further degraded feedback quality, accelerating contributor disengagement.

Contributors in the field would have continued losing ideas at the moment of insight -- no mobile capture, no dictation, no AI compilation -- because the submission experience was designed for a desktop environment that didn't match their operational reality.

The tool fragmentation problem would have worsened with every new feature built: without ecosystem positioning, each new capability would have added another entry point to a workflow that was already too complex.

System Design Insight

I fixed four things in sequence: the language (Graduate/Remediate instead of Pass/Fail), the architecture (stage-gated lifecycle with clear criteria), the network (Echo System connecting Airmen across bases), and the ecosystem positioning (defining Productable's lane before building features). Language change is the fastest lever for cultural transformation -- it costs nothing and changes everything. The architectural change made decisions transparent. The network change made knowledge compound across the organization instead of staying siloed within it. The ecosystem positioning work was the most underestimated: every design exercise failed until we stopped assuming Productable was the only tool in the room. Once we defined the handoffs, the mobile and AI roadmap became straightforward.

How to Talk About This

"'Fail' ends a conversation. 'Remediate' starts one. That single language change had a measurable impact on re-submission rates"

"The Echo System turned isolated problem-solvers into a networked intelligence ecosystem -- the same insight could now travel across bases in days instead of years"

"Leadership asked for more submissions. We gave them more submissions. They were overwhelmed. The real ask was for better signal, not more noise"

"Contributors needed to capture ideas in the field, not at a desk. Audible capture and AI compilation weren't nice-to-haves -- they were the difference between capturing an insight and losing it forever"

"Every design exercise failed until we asked the question we'd been avoiding: where does Productable fit in the ecosystem? You can't design a feature set for a platform whose lane you haven't defined"

"I fixed the language first, then the architecture, then the network, then the ecosystem positioning. In that order, because each layer was the prerequisite for the next"

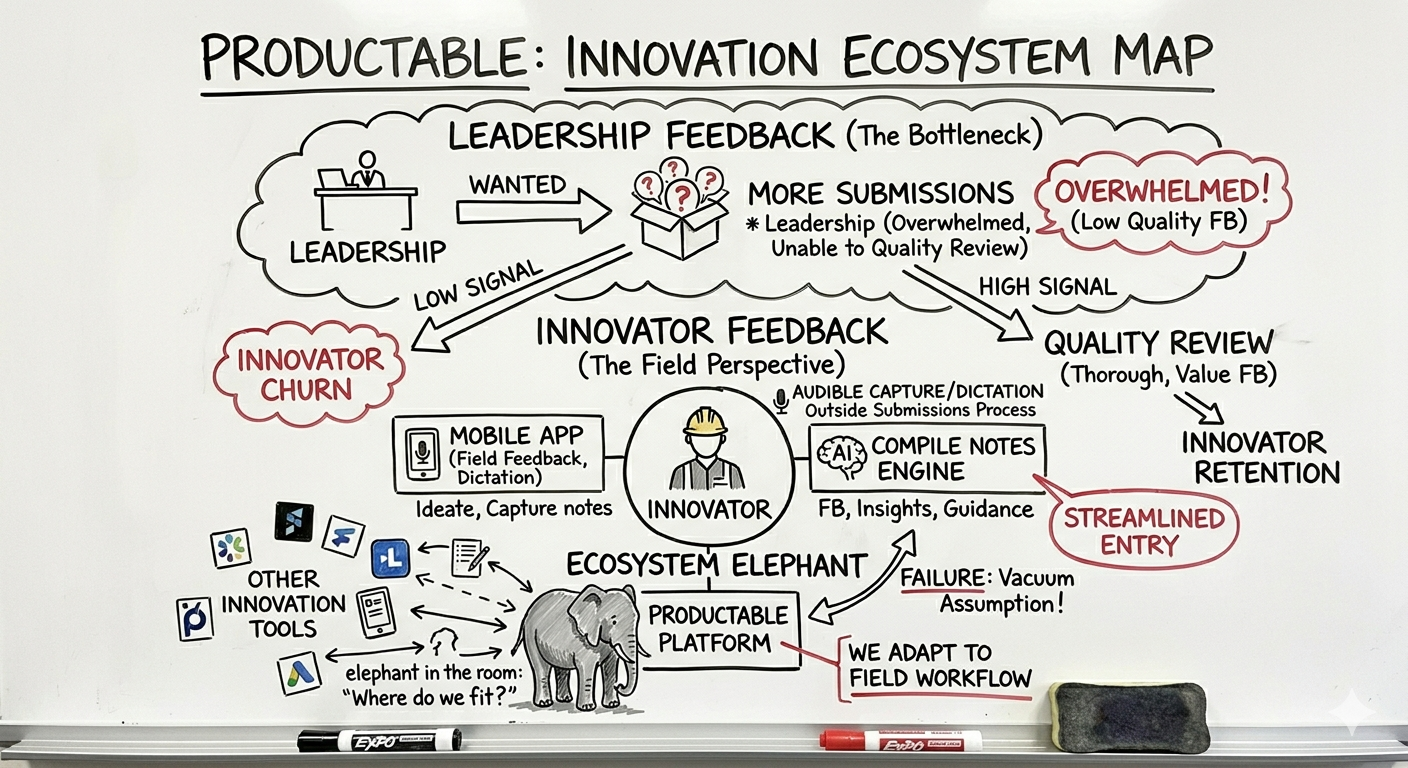

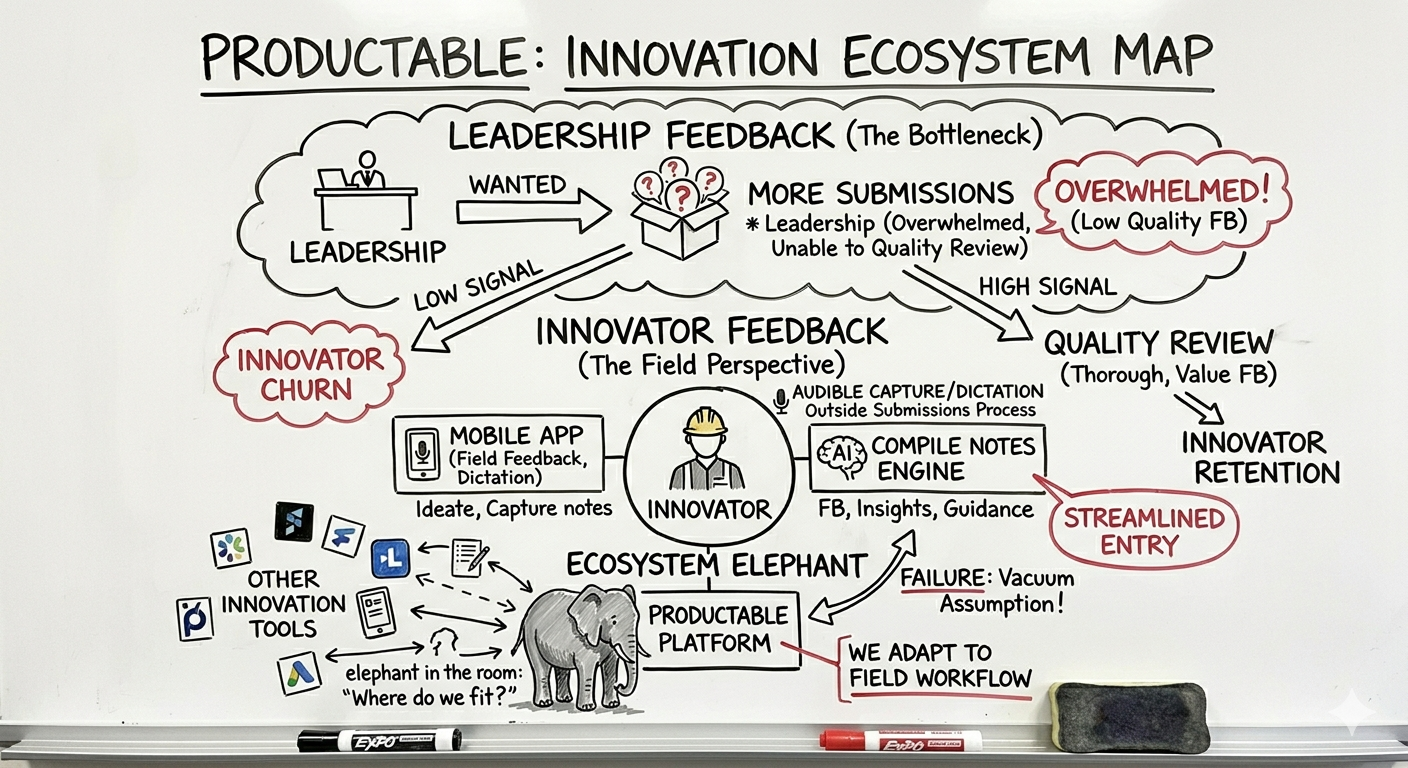

Visual Artifact

Innovation Ecosystem Map

A whiteboard session that mapped the full feedback architecture: the leadership bottleneck (volume without evaluation capacity), the innovator's field needs (mobile capture, audible dictation, AI compilation), and the ecosystem elephant -- the unanswered question of where Productable fit within the broader innovation tool landscape.

Leadership Feedback

The Bottleneck

More submissions → overwhelmed reviewers → low-quality feedback → innovator churn. The ask for volume was actually an ask for signal.

Innovator Feedback

The Field Perspective

Mobile app, audible capture, AI compilation engine, streamlined entry. Four unmet needs that no desktop-first platform could address.

Ecosystem Elephant

The Vacuum Assumption

Every design exercise failed until we asked: where does Productable fit? Defining the lane made the entire roadmap coherent.

Research & Evidence

What the data says

“95% of new products introduced each year fail, according to Clayton Christensen. Corporate innovation programs face an 88% failure rate -- the majority failing not from lack of ideas, but from lack of structured evaluation and feedback.”

The Air Force program's failure mode was not idea scarcity -- it was evaluation capacity. Leadership wanted more submissions but couldn't review them thoroughly. The volume paradox is consistent with that 88% failure rate: programs that optimize for input rather than throughput collapse under their own weight.

“Innovation decisions are 2.5 times more likely to fail than typical business decisions. Top companies succeed 2.5x more often by applying structured decision frameworks rather than relying on intuition.”

The stage-gated lifecycle and Graduate/Remediate model replaced intuition-based advancement with a structured decision framework. The 22% improvement in innovation advancement rate reflects what happens when evaluation criteria are explicit, consistent, and applied at every stage.

“Psychological safety is the hidden engine behind innovation. Teams with high psychological safety are significantly more likely to contribute ideas, report problems, and experiment -- the behaviors that drive innovation program success.”

The shift from Pass/Fail to Graduate/Remediate was a psychological safety intervention. 'Fail' ends the conversation and signals that the contributor's judgment was wrong. 'Remediate' tells the contributor their idea has merit and invites collaboration -- the exact condition psychological safety research identifies as the driver of sustained innovation engagement.

“97% of companies say good user onboarding is necessary for effective product growth. The same principles -- clarity, progression, quick wins -- apply to innovation pipeline design.”

The innovation funnel's submission and review process is, in effect, an onboarding experience for ideas. Contributors who receive clear, structured feedback early in the process are significantly more likely to resubmit and remain engaged -- the same dynamic that drives product onboarding retention.

White Paper Thread: The Decision Layer

The innovation funnel case study demonstrates that the decision layer pattern applies beyond product platforms to organizational processes. The stage-gated lifecycle is a decision system: it takes ideas as inputs, applies evaluation criteria at each stage, and produces advancement decisions as outputs. Building that system with clear inputs, consistent processes, and sustained engagement is the same architectural challenge as building a product decision layer.
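The decision-system framing above (ideas in, criteria applied, advancement decisions out) can be sketched in a few lines. This is an illustration under assumptions, not the platform's implementation: the `Idea` and `GateDecision` types, the `evaluate` function, and the criterion names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    title: str
    evidence: dict  # criterion name -> True if the submission satisfies it


@dataclass
class GateDecision:
    outcome: str          # "Graduate" or "Remediate"
    unmet_criteria: list  # what the contributor is asked to strengthen


def evaluate(idea, criteria):
    """Apply one stage gate's criteria and return a decision with rationale."""
    unmet = [c for c in criteria if not idea.evidence.get(c, False)]
    outcome = "Graduate" if not unmet else "Remediate"
    return GateDecision(outcome, unmet)


# Hypothetical criteria for a single gate.
screen_gate = ["problem_statement", "sponsor_identified", "success_metric"]

idea = Idea(
    title="Maintenance workflow checklist",
    evidence={"problem_statement": True, "sponsor_identified": True},
)
decision = evaluate(idea, screen_gate)
print(decision.outcome)         # Remediate
print(decision.unmet_criteria)  # ['success_metric']
```

The design choice worth noting is that a Remediate decision always carries the list of unmet criteria, so the contributor receives the specific next step rather than a bare rejection.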

Connective Tissue

How this connects to the larger system

Funnel Conversion and A/B Testing: USAA

Both cases address funnel optimization through structured decision frameworks. The innovation funnel uses stage-gated evaluation; the USAA funnel uses A/B testing. Both are systematic approaches to improving decision quality at each stage of a conversion process.


Onboarding and Direct Deposit: USAA

Both cases use recurring engagement models to sustain behavior over time. The innovation funnel's structured cadences and the USAA onboarding journey's 180 interactions in 30 days are the same pattern: designed touchpoints that build habits.

